
Letter from the Journal

Over the past month, we have worked intensively to welcome 37 new junior researchers into our team. We also restructured the team for a more efficient and effective workflow, welcoming two new senior researchers, Roaa Elfishawy and Radwa El-ashwal. To train the junior researchers, we planned a 3-week training program covering the basics of research, brainstorming, and writing the actual paper. Over those 3 weeks, the Editor-in-Chief and the managing researchers conducted 3 webinars on the research process, the writing process, and revising and proofreading the paper. All junior researchers were matched with fellow researchers who share similar interests and were required to complete a group article within the 3 weeks. During this period, each group was mentored by a senior researcher, who conducted 6 group sessions to guide them through writing their first review article. On the 17th of September, there was a presentation day on which each group gave a 10-minute presentation explaining its project to the audience and to a panel of preselected judges. The projects presented were all of high caliber and impressed the judges. At the end of the training, the best project received an award, and 3 runners-up were also given awards. These groups showed great dedication and enthusiasm for research throughout their projects. They also showed outstanding presentation skills, presenting their work in just 10 minutes. Therefore, in this issue, we present all the group projects created during the training, because we are proud of the endless effort that went into each and every article. We thank all the senior researchers for their tireless work as mentors, and all those who helped make this issue possible.

Best regards,

Youth Science Journal Community

The artificial pancreas: the development of closed-loop algorithms in the present

Abstract

About 422 million people worldwide have diabetes, and so far it has no cure. Biomedical engineering, however, offers a glimmer of hope. Patients no longer have to administer every insulin dose themselves, because an artificial pancreas can do that for them. The artificial pancreas eases the patient's journey with the disease both psychologically and physically. It is equipped with a blood glucose monitor and an insulin pump, so it functions much like a natural pancreas. But behind every invention that benefits humanity lie complications and, sometimes, algorithms.

I. Introduction

According to the WHO, diabetes affects around 422 million people globally and is directly responsible for 1.6 million deaths per year. Over the last few decades, both the number of cases and the prevalence of diabetes have increased significantly. It causes severe problems such as blindness, kidney failure, heart attacks, stroke, and lower-limb amputation.

II. The Incurable Disease

i. Brief about the Pancreas

The pancreas is a pear-shaped, vital part of the digestive system and is known as a mixed gland because it has both exocrine and endocrine functions. The endocrine portion consists of clusters of different cell types (the islets of Langerhans). The islets contain three main types of cells: alpha, beta, and delta. Each type secretes a specific hormone. For instance, alpha cells secrete glucagon, which raises blood glucose levels, while beta cells secrete insulin, which regulates sugar metabolism and maintains normal sugar levels in the blood.

ii. Type 1 Diabetes

When you eat carbohydrates, enzymes in your small intestine break them down into single sugar molecules called glucose. The small intestine absorbs the glucose, which then passes into the bloodstream. When this blood reaches your pancreas, beta cells detect the rising glucose levels and release insulin into your bloodstream to lower glucose levels and keep your blood glucose in a normal range.

III. The Glimmer of hope

i. Artificial Pancreas

So, if you have type 1 diabetes, your objective is to maintain a healthy blood glucose level by injecting the doses of insulin required to lower it. Thus, the artificial pancreas plays a role in this series of functions by making it continuously

ii. Types of Artificial Pancreas

Using an artificial pancreas (AP) is therefore important for people with diabetes, especially type 1 diabetes. However, many insulin delivery systems exist, each with its own mechanisms, algorithms, and outcomes. According to the official UK site for diabetes, there are three main types of artificial pancreas.

- Bionic Pancreas: It works like the CLS, using pumps to deliver both insulin and glucagon (which raises glucose in the blood). The pumps are linked to an app that coordinates the pancreas devices.

- Implanted artificial pancreas: It is an insulin delivery device containing a gel that responds to changes in glucose levels, releasing insulin at a higher rate when the glucose level rises and at a lower rate when it falls.

- Closed-Loop System (CLS):

IV. Closed-Loop Systems: A Close Look

i. Historical Appearance

The idea of a closed-loop glucose system is not new. The concept was introduced in the 1960s.

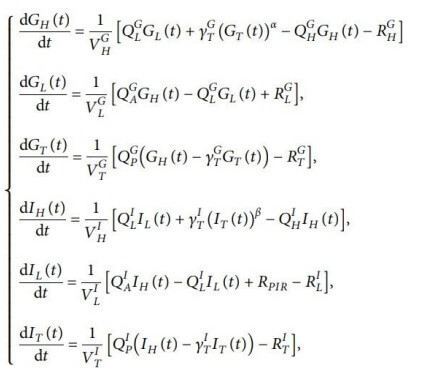

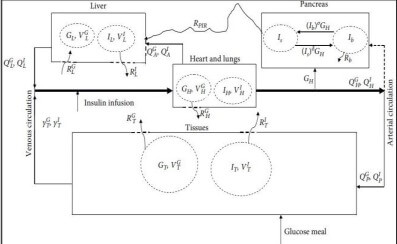

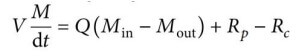

ii. The Algorithms in CLS: Natural introduction

- V: Compartment volume

- M: concentration

- Q: blood flow rate

- Rp, Rc: Metabolic production and consumption rates
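The symbols listed above are the usual ingredients of a compartmental mass balance. As a hedged illustration only (the article's own equation is not reproduced here), one generic form consistent with these symbols is V · dM/dt = Q · (M_in − M) + Rp − Rc. The inflow concentration `M_in`, the function names, and all numeric values below are assumptions introduced for this sketch, not taken from the source.

```python
def compartment_rate(V, Q, M_in, M, Rp, Rc):
    """Rate of change dM/dt for one well-mixed compartment.

    Assumed generic balance: V * dM/dt = Q * (M_in - M) + Rp - Rc.
    M_in (concentration of the inflowing blood) is a hypothetical symbol.
    """
    return (Q * (M_in - M) + Rp - Rc) / V


def simulate(V, Q, M_in, M0, Rp, Rc, dt, steps):
    """Integrate the balance with simple forward-Euler steps."""
    M = M0
    history = [M]
    for _ in range(steps):
        M += dt * compartment_rate(V, Q, M_in, M, Rp, Rc)
        history.append(M)
    return history


# Illustrative run with made-up numbers: the concentration relaxes toward
# the steady state M_in + (Rp - Rc) / Q.
trace = simulate(V=1.0, Q=1.0, M_in=100.0, M0=80.0, Rp=0.0, Rc=0.0, dt=0.1, steps=200)
```

A model like this describes how a concentration behaves; it does not by itself say how much insulin to deliver, which is the gap that control algorithms fill.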

While developing the artificial pancreas, scientists and engineers were certainly inspired by the systems of equations that model the secretion behavior of the natural pancreas. However, these systems cannot be used directly, because they model the “behavior” of the pancreas; they are not instructions that can be followed. Also, artificial pancreas systems cannot always measure the concentrations of hormones and glucose accurately and instantly. Consequently, prediction models and error-correcting algorithms are necessary to supply the human body with stable glucose levels, and that is where the importance of the control algorithms comes in.

iii. The Main Algorithms in the CLS

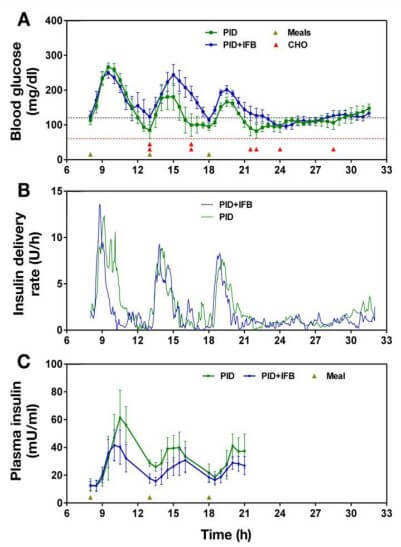

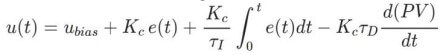

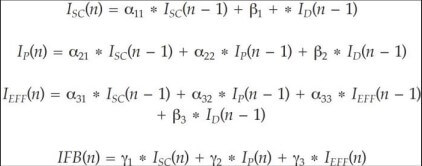

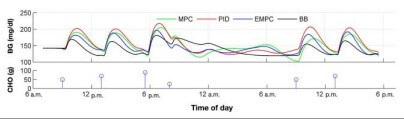

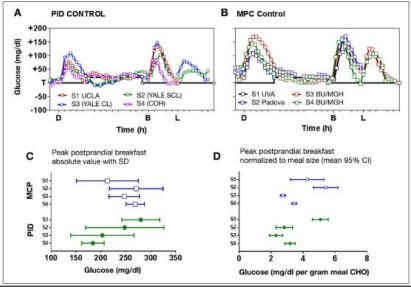

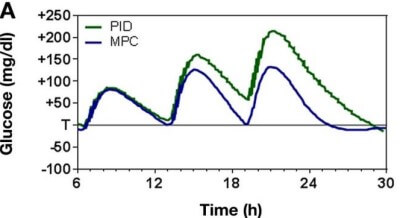

Many algorithms are used in CLS control devices, and each can be implemented in different ways, since they are principles rather than fixed recipes. There are three main types of algorithms: Model Predictive Control, Proportional-Integral-Derivative, and Fuzzy Logic Control. Here, we present these main strategies and the algorithms involved in the technology.

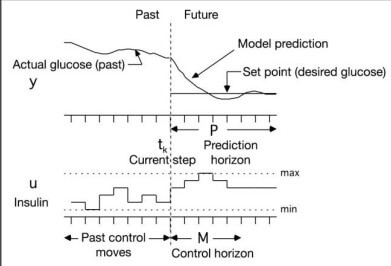

- Model Predictive Control (MPC):

- Time in the desired range: 94.83% vs. 68.2%.

- Time in hypoglycemia: 1.25% vs. 11.9%.

- Overnight time in the desired range: 89.4% vs. 85.0%.

- Time-in-hypo: 0.00% vs. 8.19%, where the first percentage is for the CLS and the second one for the OLS [19].

- Proportional-Integral-Derivative (PID):

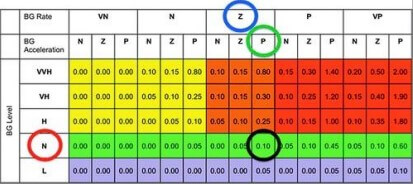

- Fuzzy Logic Controller (FLC): Fuzzy Logic (FL) is an algorithm that is fundamentally distinct from other algorithms. Other algorithms use precisely formulated mathematical models for calculations to make decisions. FL depends on linguistic rules and human experience to determine the solution to a problem. The difference between traditional model-based algorithms and FL is that FL assigns values (probabilities) between 0 and 1 to inputs, giving an output according to that value instead of using only false “0” and true “1”, as in what is known as “Boolean Logic”. For instance, if the range of normal glucose levels were set to (70 – 180) mg/dl, a Boolean Logic algorithm would treat 182 mg/dl as strictly high and would make the same decisions as for a glucose level of 270 mg/dl. FL is a more “natural” algorithm since it usually operates like our thinking, although one drawback is that it requires a lot of data and predefined rules to work.
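The 182 mg/dl versus 270 mg/dl example can be sketched in a few lines. This is a minimal illustration, not the rule base of any real controller: the function names are hypothetical, and the 250 mg/dl point at which glucose counts as fully "high" is an assumption chosen only for the demonstration.

```python
def boolean_high(glucose, upper=180.0):
    """Boolean logic: any reading above the upper limit is simply 'high' (1)."""
    return 1.0 if glucose > upper else 0.0


def fuzzy_high(glucose, start=180.0, full=250.0):
    """Fuzzy logic: degree between 0 and 1 to which a reading is 'high'.

    The degree rises linearly from 0 at `start` to 1 at `full`.
    `full=250.0` is an assumed value for illustration only.
    """
    if glucose <= start:
        return 0.0
    if glucose >= full:
        return 1.0
    return (glucose - start) / (full - start)


for reading in (182.0, 270.0):
    print(reading, boolean_high(reading), round(fuzzy_high(reading), 3))
```

With Boolean logic both readings are treated identically, whereas the fuzzy degree for 182 mg/dl stays close to 0 and the degree for 270 mg/dl is 1, so a rule such as "if glucose is high, increase insulin" can act in proportion.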

The parameters used in the system of ODEs can be fixed or adaptive. While adaptive values may sound promising, they must be applied with care because they may result in unstable systems.

The model must be corrected when measurements differ from its predictions. The simplest correction treats the difference between the measured output and the model prediction at the current step as a constant, and applies that correction only after the prediction has been made. However, there are better approaches that make smarter but more complex corrections, such as the Kalman filter.
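The constant-difference correction described above can be sketched as follows. The function name and the sample numbers are hypothetical, and the sketch assumes the first element of the forecast is the model's value for the current step.

```python
def corrected_forecast(model_predictions, measured_now):
    """Shift a model forecast by the current prediction error.

    model_predictions[0] is assumed to be the model's value for the current
    step; the remaining entries are its predictions for future steps.
    The error observed now is treated as constant over the whole horizon.
    """
    offset = measured_now - model_predictions[0]
    return [p + offset for p in model_predictions]


# Illustrative values in mg/dl: the model says 120 now, the sensor reads 126,
# so every future prediction is raised by the same 6 mg/dl.
forecast = corrected_forecast([120.0, 130.0, 140.0], measured_now=126.0)
```

A Kalman filter would instead weight the observed error by the estimated uncertainty of the model and of the sensor, which is why it is described as smarter but more complex; that is not implemented here.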

V. Comparison, Which Approach is Better?

MPC ODEs can be very complicated or relatively simple. The gain constants in PID can take different values. Modifications like IFB (insulin feedback) and FMPD (fading memory proportional derivative) can be applied to modify the behavior of PID.

On the other hand, FL depends mainly on the experience of the person defining the operation rules. Boquete, a strong MPC proponent, noticed this and mentioned it explicitly at the end of his discussion: “I also agree that it is very difficult, even in simulation studies, to have a valid comparison of different algorithms. One way or another, an algorithm must be tuned based on some performance criterion, so if particular MPC and PID algorithms are tuned with different criteria, then there is no good way to compare them.”
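The dependence on tuning can be made concrete with a textbook PID loop. The sketch below is a generic PID form, not the algorithm of any particular device: the class name, the gains, the target of 110 mg/dl, and the output units are all assumptions, and modifications such as IFB are left out.

```python
class PIDController:
    """Textbook PID controller acting on the glucose error (hypothetical sketch)."""

    def __init__(self, kp, ki, kd, target):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.target = target
        self.integral = 0.0
        self.prev_error = None

    def update(self, glucose, dt):
        """Return a non-negative insulin rate (arbitrary units) for one reading."""
        error = glucose - self.target
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0  # no previous sample yet
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        return max(0.0, output)  # insulin cannot be removed once delivered


# Same glucose readings, two different tunings (gains are made up).
readings = [180.0, 170.0, 160.0]
cautious = PIDController(kp=0.01, ki=0.0, kd=0.0, target=110.0)
aggressive = PIDController(kp=0.03, ki=0.0, kd=0.0, target=110.0)
cautious_out = [cautious.update(g, dt=5.0) for g in readings]
aggressive_out = [aggressive.update(g, dt=5.0) for g in readings]
```

The two controllers share one algorithm yet respond differently to identical data, which illustrates why a comparison between PID and MPC says as much about how each was tuned as about the algorithms themselves.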

VI. Results

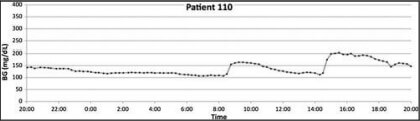

A 6-month trial by the National Institute of Diabetes and Digestive and Kidney Diseases tested the CLS in patients with type 1 diabetes, focusing on time spent in the target glycemic range.

During the trial, patients who utilized the closed-loop

device had lower glycated hemoglobin levels. The

closed-loop system had beneficial glycemic effects during

the day and at night, with the latter being more

noticeable in the second half of the night. The glycemic

advantages of closed-loop management were observed in the

first month of the study and lasted for six months. The

study population included both insulin pump users and

injectable insulin users spanning a wide age range (14 to

71 years) and baseline range of glycated hemoglobin levels

(5.4 to 10.6 percent), with similar results across these

and other baseline features of the cohort.

Patients had to be at least 14 years old and have a clinical diagnosis of type 1 diabetes; they also had to have been treated with insulin for at least a year using a pump or multiple daily injections, with no restriction on the glycated hemoglobin level. The trial included a 2-to-8-week run-in phase (the length of which depended on whether the patient had previously used a pump or continuous glucose monitor) to gather baseline data and teach patients how to use the devices.

In this 6-month trial involving patients with type 1 diabetes, the use of a closed-loop system was linked to a higher percentage of time spent in a target glycemic range than the use of a sensor-augmented insulin pump.
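The "percentage of time in a target glycemic range" metric can be computed from glucose readings as sketched below. The function name is hypothetical, the readings are made up, and the default limits of 70 and 180 mg/dl are borrowed from the example range mentioned earlier in this article; the trial's own target range is not stated in this section. The sketch also assumes equally spaced readings, so that counting samples is equivalent to measuring time.

```python
def time_in_range(readings, low=70.0, high=180.0):
    """Percentage of readings within [low, high] mg/dl.

    Assumes equally spaced samples, so the share of samples in range
    equals the share of time in range.
    """
    if not readings:
        raise ValueError("at least one glucose reading is required")
    inside = sum(1 for g in readings if low <= g <= high)
    return 100.0 * inside / len(readings)


# Illustrative readings in mg/dl: two of the four fall inside 70-180.
example_tir = time_in_range([65.0, 90.0, 150.0, 200.0])
```

The same function with `high` set to the lower limit and `low` set to zero would give a rough time-below-range figure, which is how hypoglycemia percentages are usually reported alongside it.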

VII. Future Research

It is important to note that a few problems and gaps in the development of the CLS were not addressed in previous studies and research. We address these problems here and propose possible solutions for them:

Inconsistent or ambiguous algorithm standards: a large portion of the studies do not describe in detail how the algorithm was implemented in their research design and methods section, or in some cases do not even adhere to what they describe, as the control algorithm may differ from the simulation algorithm in a simulation study. Examples of details that should be reported are the parameter tuning applied, the algorithm version used, and the number and duration of steps in the control and prediction horizons in MPC. This inconsistency leads to inaccurate information and sometimes to an algorithm being underestimated because of unfair methodology. Clear information about algorithms and precise documentation of methodology should be a necessity in any study that includes experiments, whether on real subjects or in simulation.

Not enough / inadequate data: A major problem with the research done on the AP so far is the evident lack of experiments on real subjects. Most AP studies to date have included 7 – 10 persons at most. In addition, experiments are scarce: it might be surprising that the first outpatient study based on MPC was reported in 2014. The studies also lack diversity, because they are usually conducted in North America on adult North American patients. Diversity in the characteristics of subjects is an important factor in ensuring that a medical procedure is suitable and beneficial for a wide range of people and conditions. Unfortunately, a device like the AP may not work well or provide the desired stable glucose levels for, say, Asian children.

Directing more attention to AP studies, increasing their number, and widening the range of health conditions and geographical regions they cover will improve the accuracy of the information available.

Possible improvements for the algorithms: Although the algorithms used in the AP CLS have greatly improved since the CLS's first introduction in 1964, they are still far from perfect, and there is definitely still room for improvement.

Taking PID as an example, a deviation measure with its own experimentally obtained parameter can be introduced to the algorithm along with IFB to favor staying in the target range over achieving smooth glucose changes, in order to avoid nocturnal hypoglycemia and hyperglycemia. The IFB modification is essential because it provides data about the insulin delivery history and makes predictions of the insulin and glucose levels, which allows the deviation measure to function efficiently. Research on introducing a deviation measure may provide valuable insight into the potential of this modification.

A possible suggestion concerning FLC is developing a mobile application that connects the FLC database in the AP with the clinician's computer and provides regular data about the history of insulin and glucose levels. This app would allow the clinician to communicate with the patient and monitor the patient's condition wirelessly. The interesting part is that FLC is highly customizable and, like our own thinking, operates using linguistic rules. The app could therefore offer a special benefit: the clinician could monitor the patient's status and modify the operation rules of the FLC from a computer according to the patient's individual statistics, updating them to fit the patient's needs. Such a mobile app could increase the efficiency of the algorithm, facilitate communication between the clinician and the patient and between the clinician and the FLC, and may yield useful results.

VIII. Conclusion

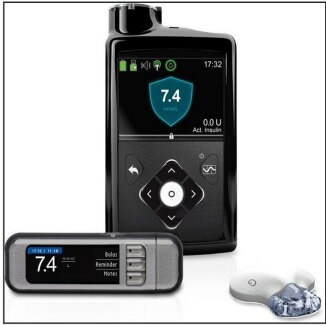

For years, scientists have studied the natural pancreas and have been able to simulate its function mathematically using various methodologies and levels of complexity. The mathematical model created by Banzi and coauthors is one of the dependable and not overly complicated models. Scientists' primary objective in developing the algorithms of the closed-loop system was to create an artificial pancreas that performs similarly to a natural one. We decided to review the closed-loop system because of its high potential benefits and because it has been the subject of several studies and tests. It consists of an insulin pump (the small cuboid-like apparatus) that may contain the control algorithm, a CGMS (the pieces at the bottom-right), and a communication device that allows doctors and experts to monitor the device and the patient's condition. The development of algorithms and the addition of complexity to increase CLS performance in real settings was, and continues to be, a key component of CLS and AP technologies. Volunteers who used the CLS reported that they completely forgot that they have diabetes, and the results were unexpected. On the other hand, the CLS still has a few problems and gaps, so we examined these problems and proposed solutions to increase the efficiency of the system. The AP aims to change patients' lives mentally, psychologically, and socially, so that about half a million people can get their work done without suffering.

IX. References

The advancement of gene therapy conjures up the hopes of treating psychiatric disorders

Abstract

Gene therapy is a potential treatment for many incurable, lethal, and chronic diseases, such as psychiatric disorders. Competing with other kinds of medication, gene therapy (also known as gene alteration) has been seen as a prospective therapeutic solution because genetics contributes heavily to the origin of many disorders. The emergence of gene therapy was accompanied by controversy about its unknown side effects and effectiveness, which impeded its development. After many experiments on mice and careful research in genomics, the first gene therapy in a human was performed in 1990 on a young girl who had been diagnosed with a rare genetic disorder. The treatment was successful, and it spurred the application of gene therapy to numerous health issues. Among the many diseases that might be treated using gene therapy, psychiatric disorders are the most prominent, as they are profoundly affected by gene defects. Depression, bipolar disorder, Alzheimer’s, and OCD are examples of mental health conditions caused by defects in certain genes.

I. Introduction

For therapeutic purposes, genetics could be an effective solution. Gene therapy is the modification or manipulation of the expression of certain genes inside the cell to change its biological behavior and cure a specific disorder. But what makes biologists think of using genetics instead of familiar medications such as pharmaceuticals?

The reason is that genetics makes a profound, direct contribution to most diseases: genes can mutate as the body grows, and genes can also be missing from birth. Such genetic problems can lead to chronic health issues.

Gene therapy is a promising approach to treating different diseases such as leukemia, heart disease, and diabetes. It could also be used to strengthen the body's immunity when fighting immune-destroying disorders like HIV.

II. Gene Therapy Overview

Gene therapy emerged in the late 1960s and early 1970s, when the science of genetics was revolutionized. In 1972, two scientists, Theodore Friedmann and Richard Roblin, published a paper titled “Gene therapy for human genetic disease?”; it pointed out that genetic treatment, by introducing a DNA sequence into a patient's cells, is a potential cure for patients with incurable genetic disorders. The paper met much disapproval, as the side effects of gene therapy were unknown at that time. However, after careful research and experiments, the first gene therapy trial in a human succeeded in 1990. The therapy was given to a young girl diagnosed with a deficiency of an enzyme called ADA, which left her immune system so vulnerable that even a mild infection could have killed her. Fortunately, that trial paved the way for gene therapy to flourish as a treatment alongside other types of medication.

III. Gene therapy vs genetic engineering

A common misconception is that gene therapy and genetic engineering are synonymous; in fact, they are different technologies. Gene therapy is a technique that aims to alter the DNA sequence inside malfunctioning cells to cure genetic defects. Genetic engineering, on the other hand, is used to modify the characteristics of a certain gene to enhance its biological functions beyond the normal. Genetically modified organisms are an obvious example of genetic engineering products. For illustration, advances in biotechnology have enabled scientists to develop modified crops with traits suited to human needs, such as a plant that needs less fertilizer and gives a higher yield.

IV. Gene therapy stages

You might have imagined gene therapy as injecting the patient with a syringe containing a gene that simply substitutes for the flawed gene inside the cell. That picture is broadly right; however, the process is not that easy.

V. Types of genetic therapy

There are two types of genetic therapy: somatic therapy and germline therapy. Somatic therapy inserts the new gene into somatic cells (cells that do not produce sperm or eggs). It does not ensure that the disease will not appear in successive generations, and it requires the patient to receive it several times, as its effect does not last long. Germline therapy, on the other hand, targets the reproductive cells, which produce the gametes that later develop into an embryo. Germline therapy is performed once in a lifetime: either on the pre-embryo to treat genetic defects, or on a flawed adult sperm or egg before fertilization.

VI. Genetic therapy and psychiatric disorders

The productivity of individuals within their society is shaped by their mental condition. If they suffer from a mental health problem, they will not function well in their daily routines. Psychiatric disorders are psychological and behavioral conditions that disturb the functions, feelings, and perceptions of the brain. Ranging from sleep troubles to Alzheimer's, psychiatric disorders take many different forms, such as depression, schizophrenia, bipolar disorders, and developmental disorders like ADHD.

VII. What makes gene therapy the expected future approach for most of the psychiatric disorders?

Despite continuous research and advances in medical treatment methods for various psychiatric conditions, a large number of patients remain unresponsive to current approaches. Developments in human functional neuroimaging have helped scientists identify specific targets within dysfunctional brain networks that may cause various psychiatric disorders. Consequently, deep brain stimulation trials for refractory depression have shown promise. As the procedure and its targets have advanced, scientists have been able to use similar techniques to deliver biological agents such as gene therapy.

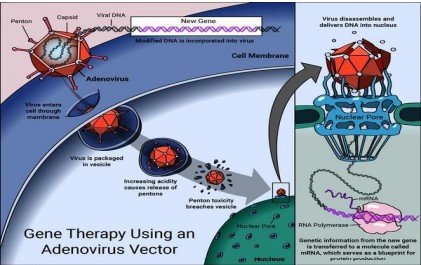

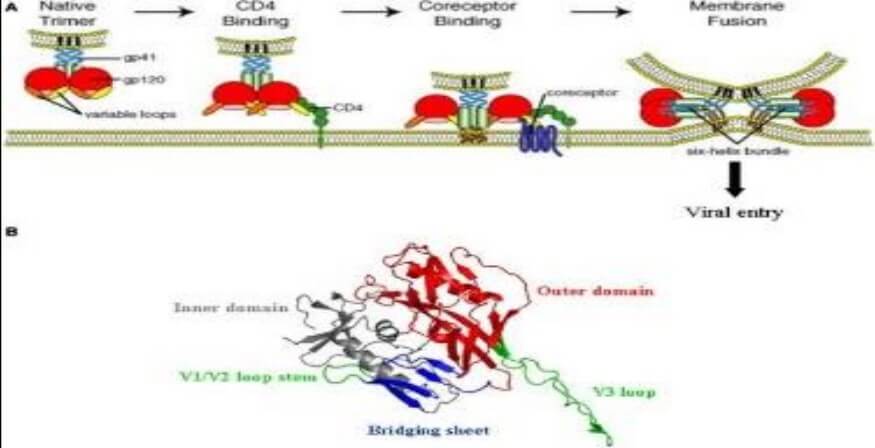

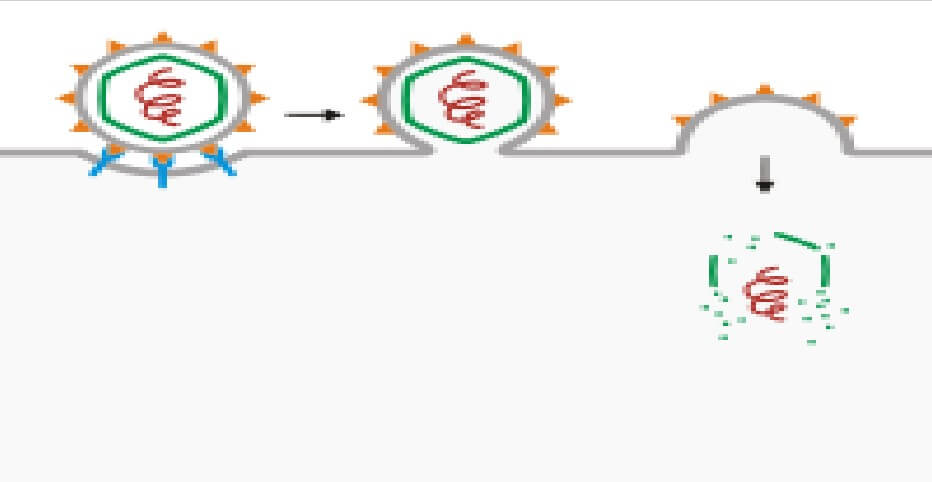

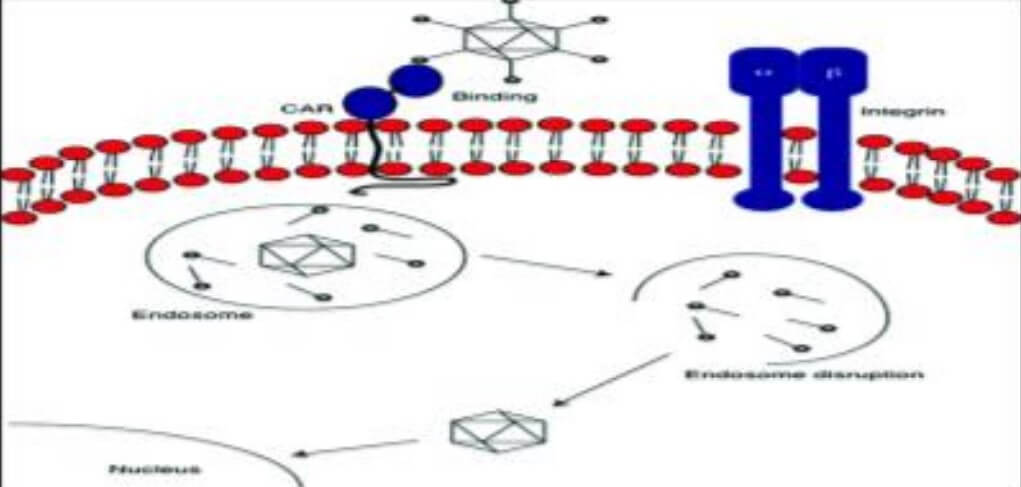

Identification of specific molecular and anatomic targets

is important for the development of gene therapy. In gene

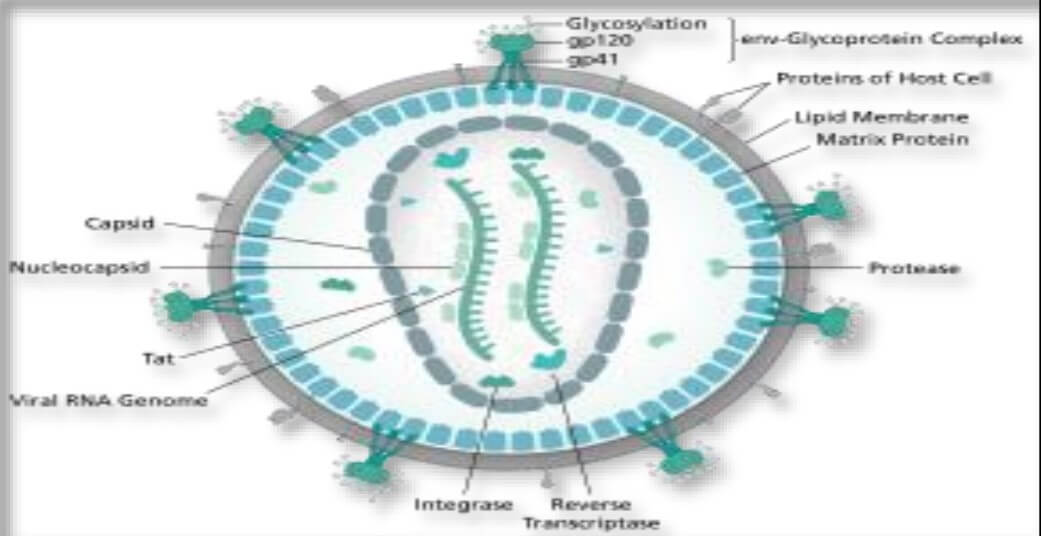

therapy, the vehicles used to transfer genes to the

neurons in the brain are modified viruses, called viral

vectors. Viruses have the ability to transfer their

genetic material to the target cells. That enables viral

vectors to take advantage of that ability. The viral coat

of the vectors is able to deliver a payload with the

therapeutic gene efficiently while decreasing the proteins

or viral genes that might cause replication and spread of

the toxicity or inflammation of the virus.

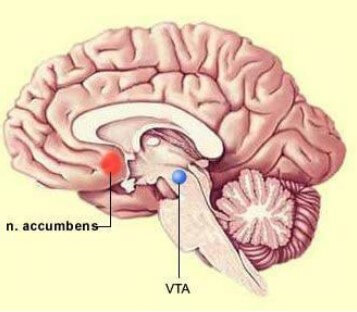

- Depression

- Addiction: In 1998, Carlezon et al. showed significant results in using gene transfer techniques to modify drug-seeking behavior in mice.

- OCD: Obsessive-compulsive disorder (OCD) is present in 2% of the world's population. It is characterized by obsessions that lead to anxiety and by behaviors performed to relieve this anxiety. It is a heterogeneous disorder that cannot be identified by clear biological symptoms or environmental causes. Sapap3 is a protein that is expressed at high levels in the striatum. In rodents, a deficiency of Sapap3 in the lateral striatum produced an OCD-like phenotype. The lateral striatum is a cluster of neurons in the basal ganglia of the forebrain. Sapap3 knockout (KO) mice exhibited OCD-like behaviors that were alleviated by selective serotonin reuptake inhibitor treatment.

VIII. Gene therapy and p11 protein in treating Depression/Bipolar

- Bipolar disorder and genetics: Bipolar disorder is a serious psychiatric disorder that is known to have a strong genetic component. Adoption studies, segregation analyses, and twin studies have shown that the risk of developing bipolar disorder, especially BP-1, can be very high due to genetic factors. Identifying a specific gene that causes bipolar disorder is difficult because the disorder does not follow Mendelian inheritance and is therefore more complex. Genome-wide association (GWA) studies have started using dense maps of single-nucleotide polymorphisms (SNPs) to study bipolar disorder. SNP mapping is the most dependable way to map genes because the maps are very dense. Baum et al. used a two-stage strategy: they started with 461 bipolar cases and 563 controls, then showed significant findings in a sample of 772 bipolar cases and 876 controls, and found evidence for novel genes linked with bipolar disorder, including a gene for diacylglycerol kinase, which plays a main role in the lithium-sensitive phosphatidylinositol pathway.

- What is p11 protein? P11 is a protein encoded by the gene S100A10. Its function is the intracellular trafficking of transmembrane proteins to the cell surface. Researchers found that mice with a decreased level of p11 protein in their brains displayed a depression-like phenotype.

- Sucrose preference test and anhedonia in mice: Anhedonia, a symptom of depression, is the inability to experience joy from enjoyable activities. The sucrose preference test (SPT) is a protocol used to measure anhedonia in mice. Rodents are known to naturally prefer sweet solutions when given a two-bottle free-choice regimen with access to both a sucrose solution and regular water. However, when they experience depression, they do not show a preference for the sucrose solution. The test is what allowed scientists to identify depression in mice, and so to test whether injecting a viral vector carrying the p11 gene could cure it.
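The outcome of a two-bottle test is commonly summarized as a single percentage: sucrose intake divided by total fluid intake. The sketch below shows that calculation; the function name and the intake values are hypothetical, and no cutoff for "anhedonia" is given because the text does not state one.

```python
def sucrose_preference(sucrose_intake, water_intake):
    """Sucrose preference in percent from a two-bottle free-choice test.

    Both intakes must use the same unit (for example ml or g).
    A value near 50% means the animal shows no preference for sucrose.
    """
    total = sucrose_intake + water_intake
    if total <= 0:
        raise ValueError("total fluid intake must be positive")
    return 100.0 * sucrose_intake / total


# Made-up intakes: a mouse that clearly prefers sucrose versus one that does not.
preferring = sucrose_preference(sucrose_intake=8.0, water_intake=2.0)
indifferent = sucrose_preference(sucrose_intake=5.0, water_intake=5.0)
```

Comparing this percentage before and after a treatment is one way to judge whether the treatment restored the animal's preference for the sweet solution.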

IX. Gene therapy pros and cons

Gene therapy research has been ongoing for decades. Researchers say that it could be used to treat various diseases. However, more investigation has been needed to establish its pros and cons.

Gene therapy is sometimes better than other treatments because it has many advantages. For instance, its effects can be long-lasting, as the defective gene is replaced by a healthy one. In germline therapy, that healthy gene is the one that will be passed on to the offspring. Furthermore, germline gene therapy could be used to replace the genes behind incurable diseases in gametes, which could help eradicate genetic diseases such as Parkinson’s disease, Huntington’s disease, and Alzheimer’s disease.

Conversely, gene therapy has several cons. For instance, it can go wrong because it is still under research. The immune system's response can lead to inflammation or organ failure. In 1999, a clinical trial at the University of Pennsylvania ended in the death of an 18-year-old participant. In the trial, an Ad5 vector was used to deliver the gene for ornithine transcarbamylase, a hepatic enzyme in which the patient was deficient. An investigation by the university showed that the man died due to a massive immune reaction.

X. Proceeding with gene therapy is difficult because it faces many ethical obstacles

- Gene therapy: A double-edged sword. We must recognize that the power of gene therapy is not limited to the cure or prevention of genetic diseases. Many people support technologies that can avoid the birth of a child with a genetic disorder such as Tay-Sachs disease, Down syndrome, or Huntington’s disease, although such technology might result in aborting established pregnancies. Questions about gene therapy have gone beyond its ability to cure some defects, and now include difficult ones such as:

- What kind of traits will an infant have?

- How much will economic forces affect the hiring or insuring of individuals who are genetically at risk of having costly diseases?

- How much data can be secured if an individual’s genetic information is stored on computer disks?

- Controversy against gene therapy: Critics assert that in a world that values physical beauty and intelligence, gene therapy may be exploited in a eugenics movement that promotes perfection. People with intellectual disabilities might not be allowed to reproduce, which could lead to discrimination. Individuals' genetic profiles may become known to other members of society, resulting in a loss of privacy. Moreover, with the world valuing strengths, special capabilities could become necessary for a person to be an active member of society. As a result, high standards of physical beauty, intelligence, and capability would be set, resulting in inequality.

- Justice in the distribution of gene therapy: If gene therapy is shown to be effective and safe in curing diseases, the rich may monopolize the treatment. Additionally, it might be used for purposes other than correcting genes, such as controlling the traits and gender of an infant. Special capabilities would come to be seen as normal, leading to a preference for rich people with perfected, modified genes. Middle- and low-income families, on the other hand, would be under pressure to achieve perfection, which would leave them oppressed. In addition, they would not have the opportunity to be treated with gene therapy because it is going to be highly expensive.

XI. Conclusion

As genetic diseases are increasing rapidly and may result in chronic health issues, gene therapy could be one of the most promising treatments. In addition to its significant success in treating diseases such as leukemia, heart disease, and diabetes, it has been found that it could contribute to treating psychiatric disorders. Various psychiatric disorders have been observed to have a major genetic component. As a result, research has been ongoing to determine whether gene therapy is scientifically appropriate for treating psychiatric disorders. Several experiments have been conducted on mice to measure the therapy’s efficiency, and these experiments have shown promise. As the research has demonstrated, gene therapy has numerous merits that can benefit humankind. Nevertheless, it has disadvantages, many of which result from the reaction of the immune system. Additionally, critics affirm that the therapy could go beyond correcting genetic defects. Ethical issues might therefore emerge, as genetic information may not be secure and standards of special capabilities may be set, which could prevent many people from being active members of society. To conclude, gene therapy is a double-edged sword; however, further research on it will contribute to the eradication of many serious diseases.

XII. References

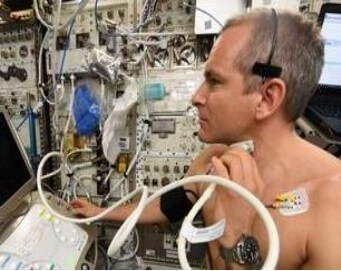

Cardiovascular impacts with astronauts on Mars

Abstract

For centuries, humans have desired to learn about and explore outer space, leave this planet, and travel for many years. In recent decades, many steps have been taken in the right direction, and as a result we have successfully reached outer space. However, the beginning was a difficult step; as a new frontier opens, new problems loom on the horizon. Many issues arose to challenge space exploration projects. Not all of these problems are associated with space itself; many are associated with humans. Because they concern human health on other planets, medical issues remain the most significant and most severe. One of these issues is the challenge to the cardiovascular system. This research delves deeper into the potential risks to the cardiovascular system and how they affect human life on other planets. Furthermore, some suggestions for resolving the issue are provided.

I. Introduction

Africa was the origin of humanity. Nevertheless, we did not all remain there; our forefathers travelled all across the continent for hundreds of years before leaving. Why? We probably glance up to Mars and wonder, "What is up there?" for the same reason. Are we able to travel there? Perhaps we could

II. Risks to the cardiovascular system during space flight assessment

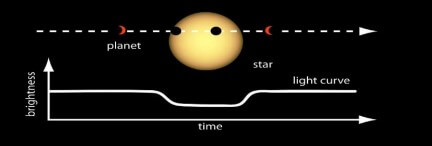

- Diminished cardiac function: Experiments in orbit have shown that when astronauts return to Earth, their stroke volume is considerably reduced

- Impaired cardiovascular autonomic functions: After microgravity exposure, the autonomically mediated baroreflex systems that regulate cardiac chronotropic responses and peripheral vascular resistance may adapt, resulting in insufficient blood pressure regulation. Hypoadrenergic responsiveness has been proposed as a contributing factor to postflight orthostatic intolerance, as demonstrated by a link between low blood norepinephrine and lowered vascular resistance in astronauts before syncope

- Impaired cardiovascular response to orthostatic stress: Since the U.S. Gemini program

- Impaired cardiovascular response to exercise stress: Human subjects exposed to ground-based microgravity simulations have shown a substantial decrease in aerobic capacity in many tests

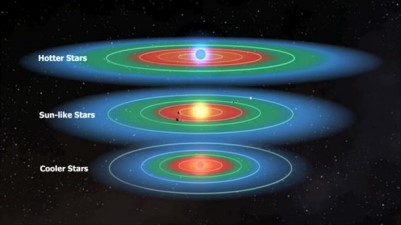

III. Cardiac output to Mars

Gravity affects several components of the circulatory system, including the heart. On Earth, for example, the veins in our legs work against gravity to return blood to the heart. In the absence of gravity, however, the heart and blood vessels change, and the longer the flight, the more severe the changes.

- In micro-gravity, the size and shape of the heart change, and the mass of the right and left ventricles decreases. This could be due to changes in myocardial mass and a decrease in fluid (blood) volume. In space, the human heart rate (number of beats per minute) is also lower than on Earth. In fact, the heart rate of astronauts standing upright on the International Space Station has been found to be similar to that of astronauts lying down on Earth before takeoff. Blood pressure is also lower in space than on Earth.

- The heart's cardiac output (the volume of blood pumped out each minute) diminishes in space as well. There is also a redistribution of blood in the absence of gravity: more blood stays in the legs and less blood returns to the heart, resulting in less blood being pumped out of the heart. Reduced blood supply to the lower limbs is also a result of muscle atrophy. Because of the lower blood flow to the muscles and the reduction of muscular mass, aerobic capacity is impacted. [20]

- Cardiovascular Health in Micro-gravity
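The link between heart rate, stroke volume, and cardiac output described in this section can be made concrete with a small calculation. The sketch below is only illustrative: it uses the standard definition (cardiac output = heart rate × stroke volume), and the function name and every numeric value are assumptions chosen for the example, not measurements from the studies cited in this article.

```python
def cardiac_output_l_per_min(heart_rate_bpm: float, stroke_volume_ml: float) -> float:
    """Cardiac output in L/min = heart rate (beats/min) * stroke volume (mL/beat) / 1000."""
    return heart_rate_bpm * stroke_volume_ml / 1000.0


# Assumed, illustrative values only (not astronaut data).
on_earth = cardiac_output_l_per_min(heart_rate_bpm=70, stroke_volume_ml=70)
in_space = cardiac_output_l_per_min(heart_rate_bpm=65, stroke_volume_ml=60)

print(f"Earth: {on_earth:.2f} L/min")
print(f"Space: {in_space:.2f} L/min")
print(f"Relative change: {(in_space - on_earth) / on_earth:.0%}")
```

Because the two factors multiply, a modest drop in both heart rate and stroke volume produces a larger relative drop in cardiac output than either change alone.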

IV. Experiment

The radiation and low gravity of space also have an impact on the body’s vascular system, causing circulatory problems for astronauts when they return to Earth and an increased risk of heart attack later in life:

Grenon grew the cells and placed them in an environment that approximated very low gravity. She discovered that a lack of gravity causes a decrease in the expression of specific genes within the cells that affect plaque adhesion to the vessel wall. While the effects of these changes aren't entirely evident, it's known that a lack of gravity has an impact on molecular features. Furthermore, previous research by Grenon revealed that micro-gravity causes changes among the cells that regulate energy flow within the heart, potentially putting astronauts at risk for cardiac arrhythmia.

In 2016, Schrepfer looked into the vascular architecture of mice that had spent time on the International Space Station, as well as vascular cells grown in simulated micro-gravity on Earth. Her team is still analyzing their findings, but it appears that the carotid artery walls thinned in the mice that flew, possibly because less blood pressure is needed for circulation in lower gravity.

The researchers also discovered that the vascular cells showed changes in gene expression and regulation that were similar to those seen in patients with cardiovascular disease on Earth. While those changes aren't harmful in the microgravity of the Space Station, they have a deleterious impact on blood circulation on Earth. When astronauts return to Earth's gravity, muscle weakness is just one of the reasons they can't get up, according to Schrepfer. “They also don't get enough blood to their brain since their vascular function is impaired,” says the researcher.

There is reason to be optimistic: Schrepfer and her colleagues have discovered a small molecule that prevents the weakening of vascular walls in mice. She and her team are planning to conduct safety experiments in people in the near future.

- Calculation attempts on Mars: The surface gravity of Mars is just 38% that of Earth. Although micro-gravity is known to cause health problems such as muscle loss and bone demineralization, it is not known whether Martian gravity would have a similar effect. The Mars Gravity Biosatellite was a proposed project designed to learn more about what effect Mars' lower surface gravity would have on humans, but it was canceled because of a lack of funding. Due to the lack of a magnetosphere, solar particle events and cosmic rays can easily reach the Martian surface.

- Mars presents a hostile environment for human habitation. Different technologies have been developed to assist long-term space exploration and may be adapted for habitation on Mars. The current record for the longest consecutive space flight is 438 days by cosmonaut Valeri Polyakov, and the most accumulated time in space is 878 days by Gennady Padalka. The longest time spent outside the protection of the Earth's Van Allen radiation belt is about 12 days for the Apollo 17 moon landing. This is minor in comparison to the 1100-day journey planned by NASA as early as the year 2028. Scientists have also hypothesized that many different biological functions can be negatively affected by the environment of Mars colonies. Due to higher levels of radiation, there are a multitude of physical side effects that must be mitigated. In addition, Martian soil contains high levels of toxins that are hazardous to human health.

- The difference in gravity would negatively affect human health by weakening bones and muscles. There is also the risk of osteoporosis and cardiovascular problems. Current rotations on the International Space Station put astronauts in zero gravity for six months, a comparable length of time to a one-way trip to Mars. This gives researchers the ability to better understand the physical state that astronauts going to Mars would arrive in. Once on Mars, surface gravity is only 38% of that on Earth. Micro-gravity affects the cardiovascular, musculoskeletal, and neurovestibular (central nervous) systems. The cardiovascular effects are complex. On Earth, 70% of the blood in the body stays below the heart; in micro-gravity this is not the case, because nothing pulls the blood down. This can have several negative effects. Once in micro-gravity, the blood pressure in the lower body and legs is significantly reduced. This causes the legs to become weak through loss of muscle and bone mass. Astronauts show signs of a puffy face and chicken legs syndrome. After the first day of re-entry to Earth, blood samples showed a 17% loss of blood plasma, which contributed to a decline in erythropoietin secretion.
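The effect of Mars' lower surface gravity on the circulation can be illustrated with a rough calculation. The sketch below is a minimal example under stated assumptions, not the calculation used by the cited researchers: it applies the standard hydrostatic relation (pressure = density × gravity × height) to a column of blood between the heart and the feet. The blood density, the 1.2 m column height, and the function name are illustrative assumptions; only the 38% figure comes from the text.

```python
EARTH_G = 9.81            # m/s^2, standard Earth surface gravity
MARS_FRACTION = 0.38      # Mars surface gravity as a fraction of Earth's (from the text)
BLOOD_DENSITY = 1060.0    # kg/m^3, assumed typical value for whole blood
PA_PER_MMHG = 133.322     # pascals in one millimetre of mercury


def hydrostatic_pressure_mmhg(gravity: float, column_height_m: float) -> float:
    """Pressure added by a standing column of blood: P = rho * g * h, in mmHg."""
    return BLOOD_DENSITY * gravity * column_height_m / PA_PER_MMHG


heart_to_feet_m = 1.2  # assumed heart-to-feet distance for a standing adult

earth = hydrostatic_pressure_mmhg(EARTH_G, heart_to_feet_m)
mars = hydrostatic_pressure_mmhg(EARTH_G * MARS_FRACTION, heart_to_feet_m)

print(f"Earth: {earth:.1f} mmHg, Mars: {mars:.1f} mmHg, ratio: {mars / earth:.2f}")
```

Since the relation is linear in gravity, the extra pressure that the leg veins work against on Mars is 38% of its value on Earth, whatever column height is assumed.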

V. Pharmacological countermeasures

After such extensive experiments, both in space and on the ground, it was determined that physical therapy should target plasma or blood expansion, autonomic dysfunction, and impaired vascular reactivity. This can assist in the identification of the most appropriate countermeasures for orthostatic and physical work performance protection. Several practices included using agents such as fludrocortisone or electrolyte-containing beverages that can increase the amount of blood circulating throughout the body. In more detail, beta-adrenergic blockers can be used to reduce the degree of cardiac mechanoreceptor activation or to inhibit epinephrine's peripheral vasodilatory effects. Furthermore, by inhibiting parasympathetic activity, disopyramide can be used to avoid vasovagal responses. Finally, alpha-adrenergic agonists such as ephedrine, etilefrine, or midodrine are used to increase venous tone and return while also increasing peripheral vascular resistance through arteriolar constriction.

VI. Conclusion

In conclusion, traveling into space is now easier than ever, but the challenge is determining how to achieve the best results while monitoring astronauts' health during space flights. As seen, medical issues arising from the cardiovascular system are critical because the system is vital to keeping astronauts alive during their missions. We have seen how differences in gravity can lead to fundamental problems in space, such as changes in the size and structure of the heart muscle, fluid shifts, and a drop in blood pressure. In addition to several possible outcomes, experiments were presented that show how radiation and low gravity in space can affect the human body, particularly the cardiovascular system. Finally, the comparison of gravity on Earth and Mars illustrates the scientific idea behind these medical problems.

VII. References

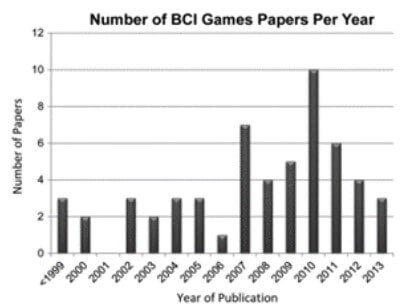

BCI based games and Psychiatric disorders

Abstract As modern computer technology has developed to understand human brain signals, it has become possible to use a computer system as an output for those signals. This technology is the brain-computer interface (BCI). A brain-computer interface, sometimes called a brain-machine interface or a direct neural interface, is a hardware and software communications system that gives disabled people a direct communication pathway between the brain and an external device, rather than the normal output through muscles. Three types of BCI have been developed: invasive, semi-invasive, and non-invasive BCIs, which differ in where they are placed relative to the brain. BCI is used in different applications, such as gaming applications that provide disabled people with entertainment driven by their brain signals, as well as in the medical and bioengineering fields. In addition, it is used as neurofeedback therapy, contributing to the treatment of psychiatric conditions such as ADHD and anxiety.

I. Introduction

Attention deficit hyperactivity disorder (ADHD) is a neurobiological disorder, characterized by symptoms of inattention, overactivity, and impulsivity

II. An overview of BCI

III. Loop and components

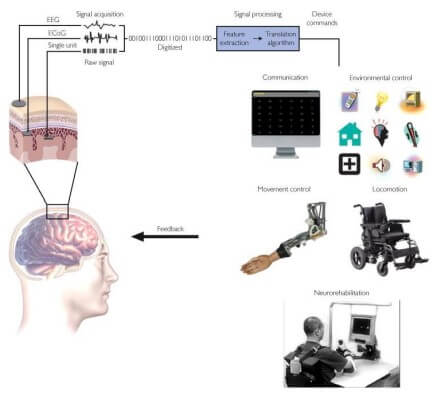

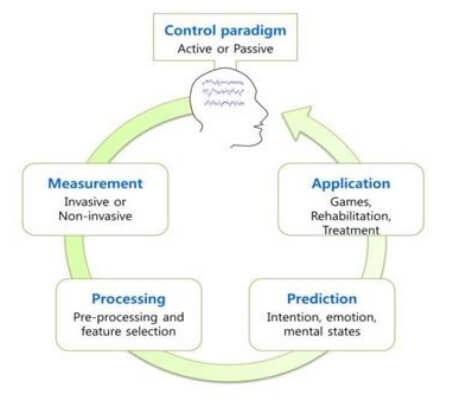

The following are the specifics of the BCI elements:

- Control paradigm: In traditional interfaces, the user transfers data to the system by pressing a button with the appropriate function or by moving the mouse. BCI, however, requires a "control paradigm" that the user is responsible for carrying out. For example, the user may imagine moving a part of the body or focus on a specific object to generate brain signals that carry the user's intent. Some BCI systems do not require intentional effort from the user; instead, the system detects the user's mental or emotional state automatically. In terms of interaction, these approaches are classified as active, reactive, or passive.

- Measurement: Brain signals can be measured in two ways: invasively and non-invasively. Invasive methods, such as electrocorticogram (ECoG), single microelectrode (ME), or micro-electrode arrays (MEA), detect signals on or inside the brain, ensuring relatively good signal quality. However, these procedures require surgery and carry numerous risks; thus, invasive methods are clearly not appropriate for healthy people. As a result, significant BCI research has been conducted using non-invasive methods such as EEG, magnetoencephalography (MEG), functional magnetic resonance imaging (fMRI), and Near-Infrared Spectroscopy (NIRS), among others. EEG is the most widely used of these techniques. It is inexpensive and portable compared to other measuring devices, and wireless EEG devices are now available on the market at reasonable prices. As a result, EEG is the most preferred and promising measurement method for use in BCI games.

- Processing: The measured brain signals are processed to maximize the signal-to-noise ratio and select target features. Various algorithms, such as spectral and spatial filtering, are used in this step to reduce artifacts and extract informative features. The target features that have been chosen are used as inputs for classification or regression modules.

- Prediction: This step makes a decision about the user's intention or quantifies the user's emotional and mental states. Classifiers such as threshold, linear discriminant analysis, support vector machine, and artificial neural network are commonly used for prediction.

- Application: After the user's intent is determined in the prediction step, the output is used to change the application environment, such as games, rehabilitation, or treatment regimens for attention deficit hyperactivity disorder. Finally, the predicted change in the application is presented to the user as feedback.
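The measurement, processing, prediction, and application stages described above can be sketched as a tiny end-to-end loop. This is a minimal illustration under loud assumptions, not a real BCI: the "EEG" is a synthetic sum of two sine waves, the processing step is a naive discrete Fourier transform used as a stand-in for spectral filtering, and the rule "more beta than alpha power means focused" is a hypothetical threshold classifier chosen only for the example. All function names and constants are invented for this sketch.

```python
import math

FS = 128   # sampling rate in Hz (assumed)
N = 256    # samples per window, i.e. a 2-second window (assumed)


def make_signal(alpha_amp, beta_amp):
    """Measurement stand-in: synthetic 'EEG' with a 10 Hz and a 20 Hz component."""
    return [
        alpha_amp * math.sin(2 * math.pi * 10 * i / FS)
        + beta_amp * math.sin(2 * math.pi * 20 * i / FS)
        for i in range(N)
    ]


def band_power(signal, f_lo, f_hi):
    """Processing: sum of normalized DFT power over the bins in [f_lo, f_hi] Hz."""
    n = len(signal)
    total = 0.0
    for k in range(1, n // 2):
        freq = k * FS / n
        if f_lo <= freq <= f_hi:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(signal))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(signal))
            total += (re * re + im * im) / (n * n)
    return total


def predict(signal):
    """Prediction: threshold classifier comparing beta (13-30 Hz) to alpha (8-12 Hz)."""
    alpha = band_power(signal, 8, 12)
    beta = band_power(signal, 13, 30)
    return "focused" if beta > alpha else "relaxed"


def apply_to_game(speed, label):
    """Application: speed the game character up when focused, slow it down otherwise."""
    return speed + 1 if label == "focused" else max(0, speed - 1)


speed = 0
for window in (make_signal(0.5, 2.0), make_signal(2.0, 0.5)):
    label = predict(window)
    speed = apply_to_game(speed, label)
    print(label, speed)  # the value shown to the player closes the feedback loop
```

A real system would replace `make_signal` with samples from an EEG headset and would usually train a classifier such as linear discriminant analysis instead of using a fixed rule.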

IV. BCI Applications

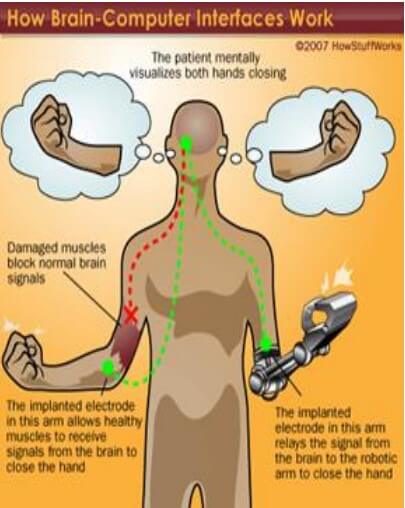

- Bioengineering Brain-computer interfaces have the potential to enable patients with severe neurological disabilities to re-enter society through communication and prosthetic devices that control the environment as well as the ability to move within that environment as shown in figure (5)

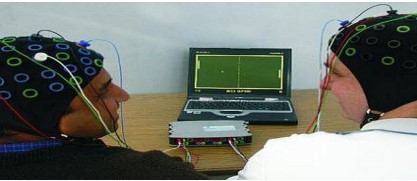

- Gaming In that hectic life, the most important interest is work and maybe family which increases boredom. So, what thoughts about disabled people without even work or entertainment? Some researchers have focused recently on the application of BCI to games for use by healthy people. Studies have demonstrated examples of BCI applications in such well-known games like “Pacman” , “Tetris”, and “World of Warcraft”, as well as new customized games, such as “MindBalance” , “Bacteria Hunt” , and others

- Medical The spectrum use of BCI for control is very wide and includes different applications such as neural prostheses, wheelchairs, home environments, humanoid robots and much more. Another exciting clinical application of BCIs focuses on facilitating the recovery of motor function after a stroke or spinal cord injury.

- Neuroscience research Neuro-technology and Neurosciences have been continuously advancing, as a result society, individuals, and healthcare professors had to deal with this advancement, BCI seems to be an emerging technology in Neurosciences. Also it is known that BCI technology is providing such a direct relation between our brains and the external devices by passing the neuromuscular pathways

V. Psychiatric disorders

Mental illnesses, or psychiatric disorders, are a wide range of health conditions or neurological disorders that can affect mood, thinking, and behavior. Having mental health concerns from time to time does not mean that a person has a mental disorder; a mental health concern becomes a mental disorder when ongoing symptoms affect the person’s ability to function. A variety of environmental and genetic factors are the main causes of psychiatric disorders, including:

- Brain chemistry: When the neural networks involving brain chemicals (which carry signals to different parts of the body and brain) are impaired, the function of nerve systems and receptors changes, causing depression and other mental issues.

- Inherited traits: Many genes are capable of increasing the risk of developing mental disorders.

- Environmental exposures before birth: Exposure to some conditions, toxins, drugs, or alcohol can sometimes be linked to psychiatric disorders.

Examples of psychiatric disorders include ADHD, autism, addictive behaviors, eating disorders, schizophrenia, anxiety disorders, and depression. Treatments include:

- Medication: Although medications do not cure psychiatric disorders, they can improve symptoms

- Psychotherapy: Also called talk therapy, it involves talking with a therapist about mental issues and conditions. Psychotherapy is in most cases completed successfully in just a few months, but in some cases life-long treatment is needed.

- Brain-stimulation treatments: These are usually used for treating depression. They include electroconvulsive therapy, vagus nerve stimulation, repetitive transcranial magnetic stimulation, and deep brain stimulation.

VI. ADHD treatment using BCI based games

To treat ADHD using BCI-based games, an experiment was conducted in 2015 to study a BCI system that mainly uses steady-state potentials, with the main aim of improving the attention levels of people suffering from ADHD

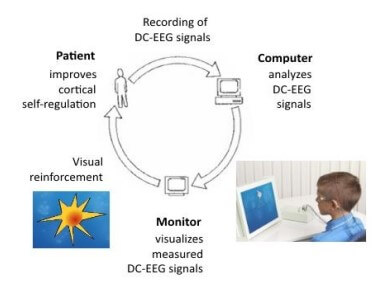

VII. BCI game therapy

Neurofeedback, also known as electroencephalography (EEG) biofeedback, is a kind of biofeedback, that is, a process of learning how to change physiological activity in order to improve performance and health. It measures brain waves and body temperature in a non-invasive way, and it changes and normalizes the speed of specific brain waves in specific brain areas to treat different psychiatric disturbances such as ADHD and anxiety.

It thus teaches self-control of brain functions by measuring brain waves and then giving feedback in the form of audio or video. The feedback depends on whether the brain activity is desirable: it is positive when the activity is desirable and negative when it is undesirable.

Neurofeedback is an adjuvant therapy for psychiatric conditions such as attention deficit hyperactivity disorder (ADHD), generalized anxiety disorder (GAD), and phobic disorder

The treatment starts by mapping out the brain through quantitative EEG to identify which areas of the brain are out of alignment. Then EEG sensors are placed on the targeted areas of the head, where brain waves are recorded, amplified, and sent to a computer system that processes the signals and gives the proper feedback. The brain's current state is then compared to what it should be doing
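The feedback rule described in this section, positive feedback for desirable activity and negative feedback otherwise, can be sketched in a few lines. This is a hypothetical illustration only: the target range, the normalized readings, and the function names are assumptions made for the example and do not describe any clinical protocol.

```python
def feedback_for(measured: float, target_low: float, target_high: float) -> str:
    """Return 'positive' when the measured activity lies inside the desired range."""
    return "positive" if target_low <= measured <= target_high else "negative"


def run_session(measurements, target_low, target_high):
    """Pair each measurement with the feedback the user would receive."""
    return [(m, feedback_for(m, target_low, target_high)) for m in measurements]


# Hypothetical normalized band-power readings from one EEG sensor.
readings = [0.2, 0.45, 0.6, 0.9]
for value, signal in run_session(readings, target_low=0.4, target_high=0.7):
    print(f"reading={value:.2f} -> {signal} feedback")
```

In practice the feedback would be delivered as a sound or a change in a video or game rather than as printed text.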

VIII. Conclusion

Neurofeedback (NFB) therapy provides the user with real-time feedback on brainwave activity. That activity is measured by sensors and presented in the form of a video display and sound. The brain-computer interface (BCI) framework consists of five stages that form a closed loop. BCI helps patients with neurological disabilities to communicate again through prosthetic devices. BCI also provides disabled people, such as those with amputated limbs, with entertainment they might otherwise lack: gaming BCI allows them to play games that require no physical effort, only brain signals. Psychiatric disorders are mental health issues that can affect mood and actions. A variety of factors can cause these disorders, including inherited traits and environmental exposures. Psychotherapy is one of the ways to treat mental health issues. In most cases, psychotherapy is completed successfully in a few months, but in some cases life-long treatment is needed. Brain-stimulation treatment can be used to treat depression, and in this age of technology, BCI can now contribute to treating these disorders as well. NFB has shown effectiveness for depression, substance abuse, anxiety, and mood instability. There is evidence that NFB decreases seizures, and some evidence also supports the effectiveness of NFB for ADHD.

IX. References

Artificial consciousness: from AI to conscious machines

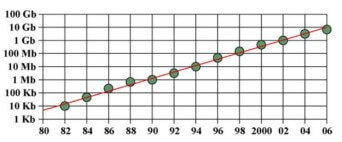

Abstract Consciousness is a subjective (implicit) experience. Artificial consciousness aims to simulate this consciousness by building a model as complex as the human brain. Any model less complex than the brain will not be able to simulate the human brain or even a part of it. Building a subject is one of the biggest difficulties because scientists still do not know what specifically a subject is, and it is impossible to build something that is not understood. Many attempts have been made to build machines able to perform tasks with the same proficiency as humans. Many succeeded, such as Deep Blue, which beat the world chess champion Garry Kasparov, but they have not reached human consciousness; such a machine just follows specific complex commands. This category of machines lacks emotions, love, creativity, desire, and curiosity. Now, scientists are trying to model the brain in RAM, where every neural connection (synapse) is represented by a floating-point number that requires 4 bytes of memory. The brain contains 10^15 synapses, which corresponds to 4 million GB of RAM. This much memory is not yet available on a computer; it is predicted to become available around 2029. This idea may fail for any reason, but many researchers, scientists, and technologists believe that artificial consciousness will become a reality someday, even if in the far future.
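The memory estimate in the abstract can be checked with a short calculation. The sketch below simply multiplies the quoted synapse count by 4 bytes per synapse; the use of decimal gigabytes (10^9 bytes) is an assumption made here so that the result matches the "4 million GB" figure.

```python
SYNAPSES = 10**15          # synapse count quoted in the abstract
BYTES_PER_SYNAPSE = 4      # one 32-bit floating-point number per synapse
BYTES_PER_GB = 10**9       # decimal gigabyte (assumption; a binary GiB is 2**30 bytes)

total_bytes = SYNAPSES * BYTES_PER_SYNAPSE
total_gb = total_bytes // BYTES_PER_GB

print(f"{total_bytes:.1e} bytes = {total_gb:,} GB")
```

Under these assumptions the total is 4 × 10^15 bytes, that is, 4,000,000 GB (4 petabytes).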

I. Introduction

- What is Consciousness? Consciousness is one of the most mysterious scientific concepts, and scientists are still discovering how human consciousness works. Consciousness is everything you experience and everything you feel; these experiences are sometimes called qualia. Many modern philosophers believe that this is just an illusion, because they believe it has no place in what should be a meaningless universe of matter and void

- The rise of Artificial Consciousness accompanied by Artificial Intelligence The rise of AI in particular, and of technology in general, in the 20th century led to the foundation of a new field related to AI: Artificial Consciousness (AC). The idea of a universal, effective AI model, that is, of creating machines that have all human aspects, is the reason scientists created this new field

- Weak artificial consciousness: It is a simulation of conscious behavior, that is, the implementation of a smart program that simulates the behaviors of a conscious being at a primary level of technology and AI, without understanding the mechanisms that generate consciousness [8]. Something like a primary model.

- Strong artificial consciousness: It refers to real conscious thinking emerging from a complex computing machine (artificial brain). In this case, the main difference with respect to the natural counterpart depends on the hardware that generates the process [8].

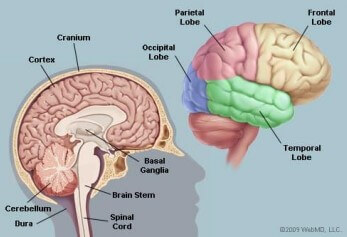

II. Consciousness, biological process, or psychological concept:

Consciousness is a subjective experience. What “it is

like” to perceive a scene, to endure pain, to entertain a

thought, or to reflect on the experience itself. When

consciousness fades, as it does in dreamless sleep, from

the intrinsic perspective of the experiencing subject, the

entire world vanishes. Consciousness mainly depends on the

integrity of certain brain regions and the particular

content of an experience depends on the activity of

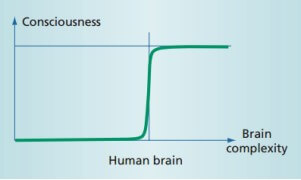

neurons in parts of the cerebral cortex (look at fig 1

- (1) It has sometimes been defined as a state, as in drowsy, alert or altered states of consciousness.

- (2) It has also been used to refer to an architectural concept, namely the executive system at the center of cognition that seems to receive input, allocate attention, set priorities, generate imagery, and initiate recall from memory.

- (3) It may be used as an indicator of representational awareness, as in becoming conscious of some specific idea or event.

III. AC Origin

- The origin of the term “AC” Engineers are always attempting to design something that cannot be defined precisely. They have aimed at building artificial replicas that imitate some features of something, real or virtual, that captured their imagination

- AC tech discipline Artificial consciousness is a technological area close to the robotics and AI technical fields. It is not surprisingly a scientific discipline and has a limited relation to psychology or neurosciences. Nevertheless, in the future, artificial consciousness could make unexpected contributions to the study of the human mind because it is a reliable testbed for checking theories and hypotheses. Artificial consciousness is closely related to “epigenetic robotics”: both disciplines stress the role of development. However, artificial consciousness leaves the implementation of the sensory-motor-cognitive system to epigenetic robotics. In simpler terms, AC addresses the relation of the robot to the external environment. Because artificial consciousness sits on two giants’ shoulders (neurosciences and artificial intelligence), researchers try not to make confusing use of linguistic terms. Today, the term ‘artificial consciousness’ has a purely technological meaning. Researchers often use consciousness in its folk psychology and everyday meaning. The researchers in the field of artificial consciousness know well that the study of natural consciousness is far from being conclusive

- They’ll have the delicate emotional cues, which convince us today that humans are conscious.

- They will be able to make other humans feel contradictory feelings.

- They’ll get mad if others don’t obey their claims.

- From AI to AC “Mind cannot be demonstrated as identical to brain activity”: this is an equivalence that Bennett and Hacker regarded as a mereological fallacy

IV. AC technical development and difficulties

- How to verify consciousness (Turing test)? In 1950, Alan Turing, an English mathematician, computer scientist, logician, philosopher, and theoretical biologist, tried to answer one of the most ambiguous questions of his time: “can computers think?” Turing considered only digital computers as machines and operationalized thinking as the ability to answer questions in a particular context. In the test, an examiner puts the same questions, about anything that comes to mind, to a computer and to a human operator. Both answer on a keyboard for 5 minutes. The answers should be good enough that the interrogator cannot easily discriminate between the human and the computer. If the examiner cannot distinguish with confidence between the computer and the operator based on the nature of their answers, we must conclude that the machine has passed the Turing test

- Development of Turing test In 1998, the scope of the questioning was widened to include nearly anything. Each judge selects a score on a scale of 1 to 10, where 1 means human and 10 means computer. Current computers can pass the Turing test (passing here means that the judges cannot confidently distinguish between the computer and the human) when the interaction is restricted to highly specific topics such as chess. So far, no computer has given responses indistinguishable from a human's in unrestricted conversation, but every year the computers' scores edge closer to an average of 5. The possibility of building a device that will pass the human Turing test, at least in the far future, has not been ruled out

- Computer beats human On 11 May 1997 at 3:00 P.M. in New York City, for the first time in history, a computer beat the reigning world chess champion, Garry Kasparov. It was IBM’s Deep Blue. It is estimated that the search space in a chess game includes about 10^120 possible positions. Deep Blue could analyze 200 million positions per second. Deep Blue's victory can be explained by its speed combined with a smart search algorithm able to account for positional advantage. In other words, the computer's superiority was due to brute force rather than sophisticated machine intelligence. The question here is whether this means that Deep Blue is conscious or not

- Difference between subject and object: Manufacturers are working on building sophisticated robots called epigenetic robots. They aim to give robots unique personalities through interaction with the environment and to make them capable of going through the developmental phases of a normal human (from toddler to adult). This idea appeals to consumers. Moreover, such robots must show emotions like happiness, anger, surprise, and sadness in different degrees. They must be curious and able to explore the external world on their own, developing according to their personal history. However, the design and implementation of robots capable of having a subjective experience of what happens to them has not been achieved yet. Recent research on consciousness is focused on the design of conscious machines. The time has come to move from behavior-based robots to conscious robots. Before any new design approach towards a new generation of artificial beings, engineers have to deal with a new problem: how to build a subject? Engineers have not built subjects before

- Is it possible that machines could be conscious? In 1980, the philosopher John Searle presented his argument that machines could not possibly think or understand. The reason is that computers perform human tasks but in an unintelligent manner. He holds that, no matter how good the performance of a program is, it cannot think or understand
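The Deep Blue figures quoted above make it possible to check why speed alone could not have been enough. The sketch below is a back-of-the-envelope calculation using only the two numbers from the text (a search space of about 10^120 and 200 million positions per second); the year length is an assumed rough conversion factor.

```python
SEARCH_SPACE = 10**120               # size of the search space, as estimated in the text
POSITIONS_PER_SECOND = 200 * 10**6   # Deep Blue's quoted analysis speed
SECONDS_PER_YEAR = 365 * 24 * 3600   # non-leap year, for a rough conversion

seconds_needed = SEARCH_SPACE // POSITIONS_PER_SECOND
years_needed = seconds_needed // SECONDS_PER_YEAR

print(f"seconds: {float(seconds_needed):.1e}")
print(f"years:   {float(years_needed):.1e}")
```

Exhausting the whole space at that speed would take on the order of 10^104 years, so the machine could only ever examine a vanishingly small part of it. This is why the "smart search algorithm" mentioned in the text matters as much as raw speed.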

V. AC future

- When will a machine become self-aware?

VI. Conclusion

Consciousness refers to your personal perception of your unique thoughts, memories, feelings, and environment. Essentially, your consciousness is your awareness of yourself and the world around you. This awareness is subjective and unique. From a neurological perspective, science is still exploring the neural basis of consciousness. But even with a complete neuroscientific picture of how the brain works, many philosophers believe that there would still be a problem, which they call the "Consciousness Problem." The brain is the most complex organ in the universe as we know it. It has about 100 billion neurons and more neural connections than there are stars in our galaxy. This is why we are incredible beings who have a spark of consciousness. A widely discussed approach to achieving general intelligent models that can be conscious depends wholly on reaching a “brain simulation”. The low-level brain model is created by scanning and mapping the biological brain in detail and copying its state to a computer system or other computing device. It is possible to state a condition that must be met for a machine to be considered self-aware or conscious: its neural network must be at least as complex as the human brain, because less complex networks are not able to produce conscious thoughts. Scientists and technologists are now working on building a model as complex as the human brain. It is just a prediction, but they believe that AC will reach human consciousness in 2029. Even if this attempt fails, they expect that AC will reach human consciousness eventually, even if in the far future.

VII. References

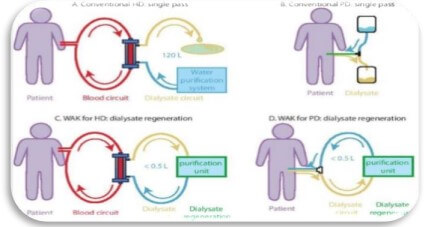

Dialysis Machine: Effect of Technological Advancements

Abstract Eight decades ago, the first artificial blood purifier was invented. Today, in a world where spending twelve hours a week in treatment is not a viable option for many patients, the same bulky machines are still used. Fortunately, scientists have been vigilant, and many have pursued the idea of developing a portable, reliable dialysis machine. In this paper, we begin by analyzing the principle of dialysis. Then we shed light on the technological innovations achieved since the first dialysis machine was mass-produced. The use of a high-flux membrane dialyzer, ultrapure dialysis fluid, and convection fluid has proved to greatly improve dialysis. However, difficulties still prevail: until an efficient substitute can be found for the dialysate and proper healthcare can be given to patients at home, a portable dialysis machine is not going to be devised.

I. Introduction

The kidney is arguably one of the most important organs in the human body because it cleans the blood of toxic wastes; when it loses its renal function, the person's life is at risk. Hence, scientists have long hoped to invent a machine that simulates the function of the kidneys to clean human blood. The first successful machine for human dialysis was invented and operated in the 1940s by a Dutch physician, Willem Kolff. Kolff came up with the idea of developing a blood purifier when he saw a patient with kidney failure. He became interested in the possibility of artificially simulating kidney function to remove toxins from the blood of patients with uremia, or kidney failure. Although only one person was successfully treated, Kolff continued his experiments to develop his design.

II. The scientific premise behind Dialysis

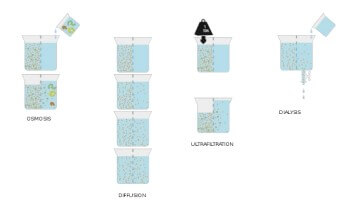

The principles of dialysis can be traced back to Thomas Graham's discovery of the laws of diffusion. In his first article on gaseous diffusion, Graham proposed that the rate of gaseous flow was related to the gas's density. He examined the escape of hydrogen through a tiny hole in platinum and observed that hydrogen molecules moved out four times more quickly than oxygen molecules. His tests were designed so that he could quantify the relative speeds of specific molecular movements. He also observed that heat increased the speed of these molecular movements, as well as the force with which a given weight of gas resisted atmospheric pressure. Graham's numerical calculations revealed that the velocity of flow was inversely proportional to the square root of the gas's density. His law showed that the specific gravity of gases could be determined more precisely than before. He also notably observed that the more diffusive gas escapes faster from a mixture.
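The factor of four reported for hydrogen and oxygen can be checked against the inverse-square-root relationship. The following is a minimal sketch, assuming that at equal temperature and pressure a gas's density is proportional to its molar mass; the function name and the dictionary of molar masses are illustrative and do not come from Graham's papers.

```python
import math

# Standard molar masses in g/mol.
MOLAR_MASS = {"H2": 2.016, "O2": 31.998}


def effusion_rate_ratio(light_gas: str, heavy_gas: str) -> float:
    """Return rate(light_gas) / rate(heavy_gas) under Graham's law.

    Assumes equal temperature and pressure, so density is proportional
    to molar mass and the ratio is sqrt(M_heavy / M_light).
    """
    return math.sqrt(MOLAR_MASS[heavy_gas] / MOLAR_MASS[light_gas])


ratio = effusion_rate_ratio("H2", "O2")
print(f"Hydrogen escapes about {ratio:.2f} times faster than oxygen")
```

The computed ratio is close to four, which agrees with the observation described above.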

Graham's work on diffusion paved the way for the invention of the dialysis machine. In dialysis, blood flows along one side of a semipermeable membrane, and a dialysate, or special dialysis fluid, flows along the opposite side. A semipermeable membrane is a thin layer of material with pores, or holes, of different sizes. Smaller solutes and fluid pass through the membrane, but the membrane blocks the passage of larger substances. This replicates the filtering process that occurs when blood enters the kidneys and the larger substances are separated from the smaller ones in the glomerulus.
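The size-based separation performed by the membrane can be illustrated with an idealized model. This is only a sketch: real membranes have a gradual sieving curve rather than a sharp threshold, and the cutoff value and function names below are hypothetical. The molecular weights are approximate textbook values.

```python
# Approximate molecular weights in daltons.
SOLUTES_DA = {
    "urea": 60,
    "creatinine": 113,
    "beta-2 microglobulin": 11_800,
    "albumin": 66_500,
}

# Hypothetical cutoff, chosen only to illustrate the idea.
HYPOTHETICAL_CUTOFF_DA = 5_000


def passes_membrane(molecular_weight_da: float, cutoff_da: float) -> bool:
    """Idealized sieving: a solute crosses only if it is below the cutoff."""
    return molecular_weight_da < cutoff_da


def split_by_membrane(solutes: dict, cutoff_da: float) -> tuple:
    """Return (names that cross, names that are retained in the blood)."""
    crossing = [n for n, mw in solutes.items() if passes_membrane(mw, cutoff_da)]
    retained = [n for n, mw in solutes.items() if not passes_membrane(mw, cutoff_da)]
    return crossing, retained


crossing, retained = split_by_membrane(SOLUTES_DA, HYPOTHETICAL_CUTOFF_DA)
print("Crosses the membrane:", crossing)
print("Retained in the blood:", retained)
```

With this assumed cutoff, the small waste molecules cross into the dialysate while the proteins stay in the blood.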

In general, dialysis devices are preceded by pretreatment systems (primarily reverse osmosis [RO]), which deliver high-quality water that meets the appropriate requirements. Scientists believe that malfunctioning pretreatment systems, and the resulting poor quality of the feed water supplied to the dialysis instrument, might be related to some tragic occurrences at dialysis centers. Even minimal concentrations of trace elements such as heavy metals in dialysis water can severely disrupt trace element levels in patients on dialysis. Elements such as aluminum, nickel, cadmium, lead, and chromium must therefore be given particular attention. A rise in nickel level, for example, may lead to acute nickel poisoning. Over the long term, aluminum disrupts the calcium phosphate balance in dialysis patients and also contributes to brain and bone conditions. Based on the above, reducing heavy metals in the water is essential.

III. Technological Innovations in Hemodialysis

i. Online Monitoring Technologies

Dialysis automation and profiling have made the process safer for the patient and the care team by reducing the incidence of human error. Online monitoring relies on real-time information about parameters such as blood volume (BV), dialysate conductivity, urea kinetics, and thermal energy balance. The dialysis machine uses these measurements to apply automated actions that keep the patient within physiological limits, such as sodium and potassium modeling and temperature control, which affect the patient during or after dialysis.

ii. Effects of Automated Sodium Modeling

Based on the measurements it receives, the machine decides whether to keep the current concentration or change it; one of these measurements is the dialysate sodium concentration. The machine tends to raise the dialysate sodium concentration to prevent intradialytic hypotension, but this can cause harm after dialysis: increased thirst, interdialytic weight gain, and hypertension. It should be kept in mind that fluid retention of ≥ 4 kg between two consecutive dialysis sessions is associated with a higher risk of cardiovascular death.

iii. Effects of Automated Potassium Modeling

An analysis conducted as part of the 4D study shows that a portion of the high mortality is due to sudden death or abnormal cardiac rhythm, where:

- Patients without sinus rhythm were 89% more likely to die.

- Cardiovascular events and stroke risk increased by 75% and 164%, respectively, compared with patients with preserved sinus rhythm.

- Left ventricular hypertrophy more than doubled the risk of stroke and increased the incidence of sudden death by 60% [11].

Sudden shifts in plasma potassium during hemodialysis sessions can cause death in arrhythmia-prone patients. A dialysate with a lower potassium concentration is used to remove the excess potassium, because it provides the necessary concentration gradient.

iv. Effects of Automated Temperature Modeling

Temperature modeling has been implemented by adjusting the dialysate temperature through blood temperature monitoring integrated into HD machines. The machine adjusts the dialysate temperature to between 34 and 35.5 °C, based on a patient blood temperature of 37 °C, which gives better cardiovascular stability during HD treatment than the standard dialysate temperature.
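The idea of keeping the dialysate temperature inside a fixed range while responding to the measured blood temperature can be sketched as a clamped proportional rule. This is purely illustrative and not a clinical algorithm: the gain and the control rule are hypothetical assumptions, and only the temperature bounds and the 37 °C baseline come from the text.

```python
DIALYSATE_MIN_C = 34.0   # lower bound quoted in the text
DIALYSATE_MAX_C = 35.5   # upper bound quoted in the text
BLOOD_BASELINE_C = 37.0  # patient blood temperature quoted in the text
GAIN = 1.5               # hypothetical: dialysate cooling per degree of blood warming


def dialysate_setpoint(blood_temp_c: float) -> float:
    """Return a dialysate temperature clamped to the quoted range.

    At the baseline blood temperature the upper bound is used; the setpoint
    is lowered in proportion to how far the blood warms above the baseline.
    """
    drift = blood_temp_c - BLOOD_BASELINE_C
    raw = DIALYSATE_MAX_C - GAIN * drift
    return max(DIALYSATE_MIN_C, min(DIALYSATE_MAX_C, raw))


for blood_temp in (36.5, 37.0, 37.5, 38.5):
    print(f"blood {blood_temp:.1f} C -> dialysate {dialysate_setpoint(blood_temp):.2f} C")
```

Whatever the measured blood temperature, the clamp guarantees that the setpoint never leaves the 34 to 35.5 °C window.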

IV. Purity of Dialysate and Dialysis Water

The water and concentrates used to produce dialysate, as well as the dialysate itself, are required to meet quality standards in order to reduce the risk of injury to HD patients from chemical and microbiological contaminants that may be present in the dialysate.

Intact Bacteria vs. Bacterial Products

The dialysate can contain relatively harmless contaminants, such as intact bacteria, which cannot cross the dialyzer membrane, and harmful bacterial products, such as endotoxins, endotoxin fragments, peptidoglycans, and pieces of bacterial DNA, which can cross into the bloodstream and cause chronic inflammation by stimulating mononuclear cells. The induced inflammatory state may be an essential contributor to the long-term illness associated with HD.

Preparation of Ultra-Pure Water

Studies have shown that tiny fragments of bacterial DNA can sustain chronic inflammation in HD patients by prolonging the survival of inflammatory mononuclear cells.

V. Hemofiltration & Hemodiafiltration

Hemodialysis is based on diffusion: the exchange of solutes from one fluid to another through a semipermeable membrane along a concentration gradient. Even high-flux HD membranes make little difference to the amount of larger solutes removed, because solute diffusivity decreases rapidly with increasing molecular size. By contrast, convection therapies such as hemofiltration (HF) and hemodiafiltration (HDF) can remove larger solutes. Convection requires large volumes of substitution fluid, which are supplied by online ultrafiltration of dialysate, together with sophisticated volume control systems to maintain fluid balance.
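The drop in diffusivity with molecular size can be illustrated with the Stokes-Einstein relation, D = k_B T / (6 π η r), which treats a solute as a sphere of radius r moving through a fluid of viscosity η. This is a simplified sketch: the relation is an idealization, the viscosity is an approximate value for water near body temperature, and the radii below are hypothetical values chosen only to span small to large solutes.

```python
import math

K_B = 1.380649e-23        # Boltzmann constant, J/K
BODY_TEMP_K = 310.15      # 37 degrees C
WATER_VISCOSITY = 6.9e-4  # Pa*s, approximate value for water near 37 C


def stokes_einstein(radius_m: float) -> float:
    """Diffusion coefficient (m^2/s) of a sphere of the given radius."""
    return K_B * BODY_TEMP_K / (6 * math.pi * WATER_VISCOSITY * radius_m)


# Hypothetical hydrodynamic radii in nanometres (illustrative only).
EXAMPLE_RADII_NM = [("small solute", 0.2), ("middle molecule", 1.5), ("large protein", 3.5)]

for label, radius_nm in EXAMPLE_RADII_NM:
    d = stokes_einstein(radius_nm * 1e-9)
    print(f"{label:16s} r = {radius_nm} nm  D = {d:.2e} m^2/s")
```

Under this model the diffusion coefficient is inversely proportional to the radius, so a solute several times larger diffuses several times more slowly, which is why convection is needed to remove larger molecules efficiently.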

Hemodiafiltration

HDF using a high-flux membrane dialyzer, ultrapure dialysis fluid, and convection fluid is highly efficient. According to study results, high-efficiency online HDF is associated with a 35% reduced risk of mortality. Regular use of online HDF is also associated with reduced morbidity compared with standard HD.

Hemofiltration

A comparative study of high-flux HF versus ultrapure low-flux HD showed significantly better survival with HF than with standard HD (78% vs. 57% at 3-year follow-up). The study also revealed inclusion and logistical problems associated with online monitored hemofiltration.

VI. Difficulties facing the development of portable dialysis machines

Many obstacles have hindered the development of a smaller dialysis machine, let alone a full-fledged wearable artificial kidney. The primary impediment has been the lack of an effective strategy for removing toxins without using substantial volumes of dialysate, a limitation that applies to both hemodialysis and peritoneal dialysis. Three approaches have been explored:

- The use of sorbent material. NASA has extensively studied ways to remove organic waste from solutions in order to recover potable water during manned space travel. These efforts have led to the development of sorbents (materials that absorb other compounds very efficiently), which can also be used to detoxify dialysate solutions. Almost all attempts to develop a wearable artificial kidney to date have incorporated sorbent materials into the dialysate circuit to regenerate the dialysate. Sorbents containing activated charcoal are very effective in absorbing heavy metals, oxidants, and some uremic toxins such as uric acid and creatinine. However, sorbents have historically proven ineffective in binding and removing urea, which has limited the usefulness of sorbent-based systems.

- Decomposition of urea using enzymes. Scientists have made many attempts to use enzymes to convert the urea in the spent dialysate into ammonia and carbon dioxide so that the dialysate can be reused. The ammonia is then absorbed by a sorbent called zirconium phosphate, and the carbon dioxide is released into the atmosphere. However, the combined use of sorbents and the enzymatic decomposition of urea is still being tested and studied.

- Electro-oxidation. This method also dates back to early NASA investigations into using electro-oxidation to break urea down into carbon dioxide and nitrogen gas on metal-containing electrodes. These gases are then released into the atmosphere. However, the process can corrode the metal, so further work is needed to develop this method.

Even if dialysis machines were reduced in size, patients with kidney failure would still face many problems. Health care is one example: patients in the hospital are safe because doctors and nurses are close by, but if dialysis becomes mobile and takes place far from the hospital, that level of care will not be available, and a patient on portable dialysis will not have access to a caregiver in the event of machine failure or exsanguination due to vascular disconnection.
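To give a sense of scale for the enzymatic route listed above, the amount of ammonia that the zirconium phosphate sorbent would have to take up can be estimated from the stoichiometry of urea hydrolysis, CO(NH2)2 + H2O → 2 NH3 + CO2. This is a minimal sketch that assumes complete conversion; the function name is illustrative, and the 20 g input is an arbitrary example, not a clinical figure.

```python
# Standard molar masses in g/mol.
UREA_G_PER_MOL = 60.056
AMMONIA_G_PER_MOL = 17.031
CO2_G_PER_MOL = 44.009


def hydrolysis_products(urea_grams: float) -> dict:
    """Masses of ammonia and carbon dioxide from complete urea hydrolysis.

    Each mole of urea yields two moles of ammonia and one mole of CO2.
    """
    moles_urea = urea_grams / UREA_G_PER_MOL
    return {
        "ammonia_g": 2 * moles_urea * AMMONIA_G_PER_MOL,
        "carbon_dioxide_g": moles_urea * CO2_G_PER_MOL,
    }


products = hydrolysis_products(20.0)  # arbitrary example amount of urea
print(f"Ammonia to absorb: {products['ammonia_g']:.1f} g")
print(f"Carbon dioxide released: {products['carbon_dioxide_g']:.1f} g")
```

Under these assumptions, more than half of the mass of the urea removed ends up as ammonia that the sorbent must capture.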

VII. Conclusion

It cannot be denied that impressive technological innovations have been introduced in the field of dialysis over the past few decades, from the invention of the first machine until now. Translating these technical achievements into hard clinical outcomes is more difficult to demonstrate, but some innovations have genuinely improved dialysis. Even so, it is unlikely that the most recent innovations will be adopted within the next few years, as there are not enough studies that ensure the long-term safety of patients. Furthermore, a caregiver will still need to be on hand if an artificial kidney is introduced.

VIII. References

Evaluating the effectiveness of smart nanomaterials in Nanodrug Delivery Systems

Abstract Nanodrug delivery systems (NDDSs) are drug delivery systems made of nanoscale materials that encapsulate active compounds intended to treat certain conditions. They can be made of many different materials; however, hydrogels, polymeric nanoparticles, and carbon nanotubes have become some of the more prominent NDDSs in recent years. Each NDDS has properties specific to its material. This literature review seeks to establish which NDDS performs best on a set of general properties that can be observed in all DDSs, with a focus on hydrogels, polymeric nanoparticles, and carbon nanotubes. After analyzing the data and properties of each of the three materials, we found that each one surpasses the others in one property that makes it unique. Thus, determining which of these NDDSs is the most effective in general is difficult, and they should be chosen based on what they would be used for in a specific circumstance.